Map of custom labels that will be added to all GPU Operator managed pods. Set this value to false when using the Operator on systems The GPU operator deploys PodSecurityPolicies if enabled.īy default, the Operator deploys the NVIDIA Container Toolkit ( nvidia-docker2 stack)Īs a container on the system. Set this variable to false if NFD is already running in the cluster. A100).ĭeploys Node Feature Discovery plugin as a daemonset. Byĭefault, the MIG manager only runs on nodes with GPUs that support MIG (for e.g. The MIG manager watches for changes to the MIG geometry and applies reconfiguration as needed. See the Component Matrixįor more information on supported drivers.Ĭontrols the strategy to be used with MIG on supported NVIDIA GPUs.

Version of the NVIDIA datacenter driver supported by the Operator.ĭepends on the version of the Operator. Refer to the precompiled driver containers page for the supported operating systems. This option is available as a technology preview feature for select operating systems. When set to true, the Operator attempts to deploy driver containers that have

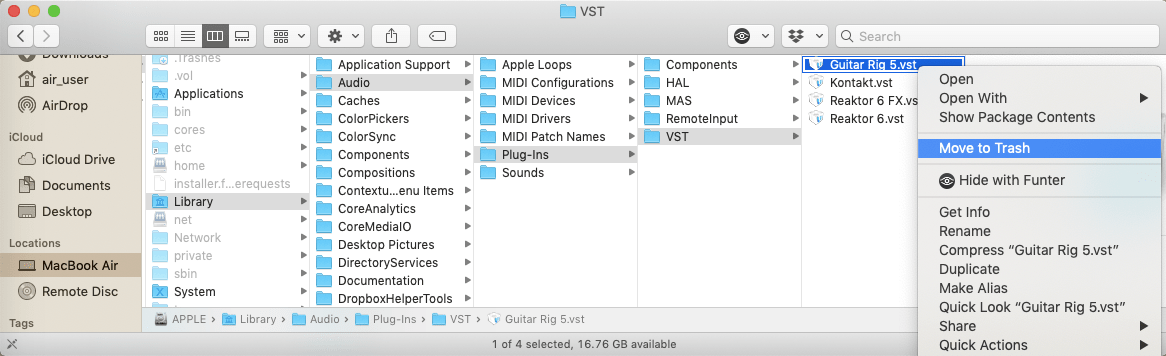

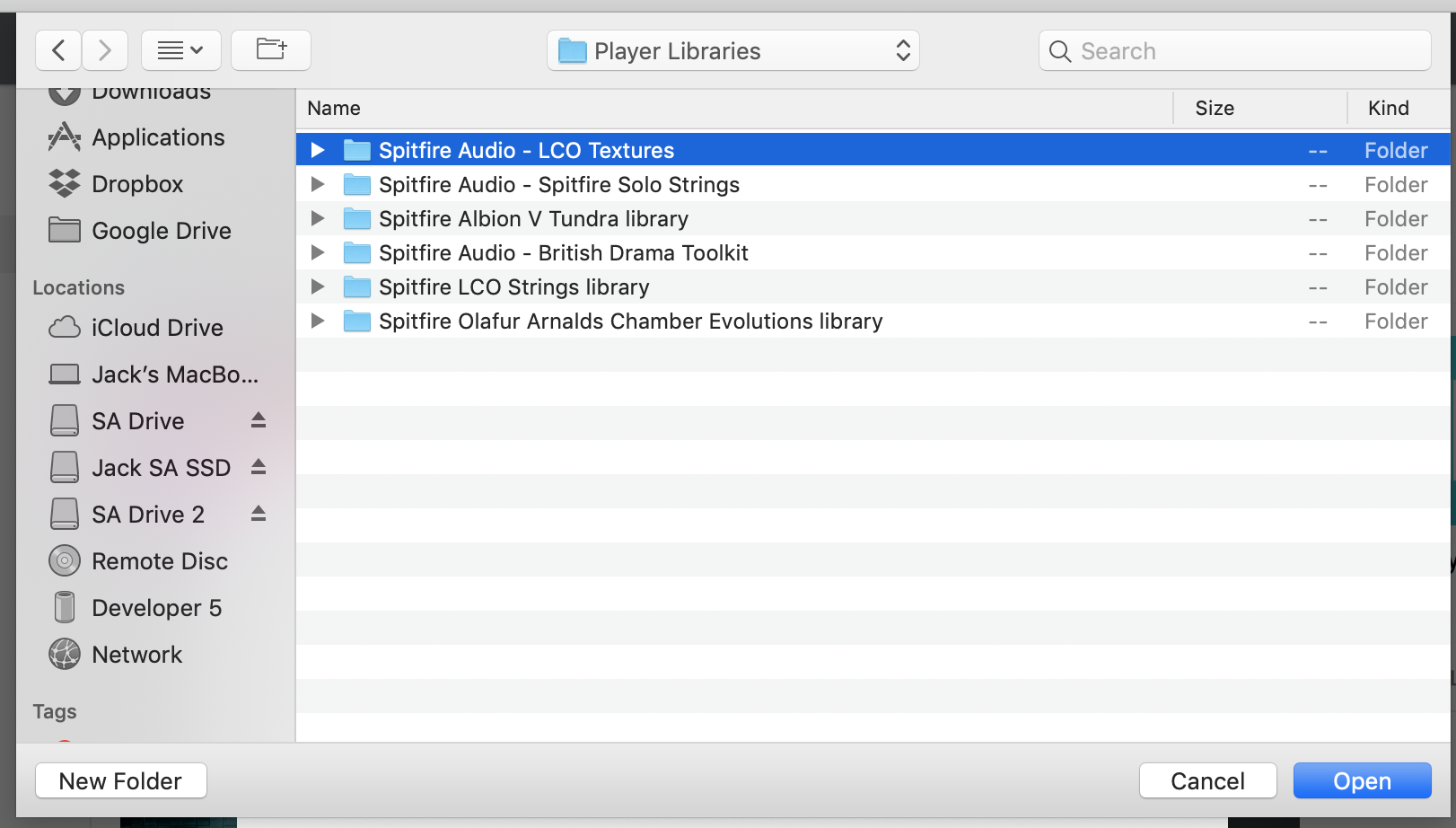

This is used to build and load nvidia-peermem kernel module. Indicate if MOFED is directly pre-installed on the host. Specify another image repository when usingĬontrols whether the driver daemonset should build and load the nvidia-peermem kernel module. Set this value to false when using the Operator on systems with pre-installed drivers. Map of custom labels to add to all GPU Operator managed pods.īy default, the Operator deploys NVIDIA drivers as a container on the system. Map of custom annotations to add to all GPU Operator managed pods. When set to true, the container runtime uses CDI to perform device injection by default. Specify nvidia-legacy to prevent using CDI to perform device injection. Pods can specify ntimeClassName as nvidia-cdi to use the functionality or Using CDI aligns the Operator with the recent efforts to standardize how complex devices like GPUsĪre exposed to containerized environments. Nvidia-cdi and nvidia-legacy, and enables the use of the Container Device Interface (CDI)įor making GPUs accessible to containers. When set to true, the Operator installs two additional runtime classes, These options can be used with -set when installing via Helm. The following options are available when using the Helm chart. Git and Docker/Podman are required to build the vGPU driver image from source repository and push to local registry. The user is required to provide these secrets to the NVIDIA GPU-Operator in the driver section of the values.yaml file. See the Kubernetes Documentation for more information. Private registry access is usually managed through imagePullSecrets. Please refer to NVIDIA vGPU Documentation for details.Ī NVIDIA vGPU License Server is installed and reachable from all Kubernetes worker node virtual machines.Ī private registry is available to upload the NVIDIA vGPU specific driver container image.Įach Kubernetes worker node in the cluster has access to the private registry. 
The NVIDIA vGPU Host Driver version 12.0 (or later) is pre-installed on all hypervisors hosting NVIDIA vGPU accelerated Kubernetes worker node virtual machines. To enable the KubeletPodResources feature gate, run the following command: echo -e "KUBELET_EXTRA_ARGS=-feature-gates=KubeletPodResources=true" | sudo tee /etc/default/kubeletīefore installing the GPU Operator on NVIDIA vGPU, ensure the following. From Kubernetes 1.15 onwards, its enabled by default. If NFD is already running in the cluster prior to the deployment of the operator, then the Operator can be configured to not to install NFD.įor monitoring in Kubernetes 1.13 and 1.14, enable the kubelet KubeletPodResources feature By default, NFD master and worker are automatically deployed by the Operator. Node Feature Discovery (NFD) is a dependency for the Operator on each node. Nodes must be configured with a container engine such as Docker CE/EE, cri-o, or containerd. This document provides instructions, including pre-requisites for getting started with the NVIDIA GPU Operator.įor installing the GPU Operator on clusters with Red Hat OpenShift using RHCOS worker nodes,įor installing the GPU Operator on VMware vSphere with Tanzu leveraging NVIDIA AI Enterprise,įollow the NVIDIA AI Enterprise document.įor getting started with NVIDIA GPUs for Google Cloud Anthos, follow the getting startedīefore installing the GPU Operator, you should ensure that the Kubernetes cluster meets some prerequisites. Integrating GPU Telemetry into Kubernetes.
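As a rough illustration, a few of the Helm options described in this document might be combined in a values file like the one below. This is a minimal sketch, not a definitive configuration: the parameter names (`nfd.enabled`, `driver.enabled`, `cdi.enabled`, `mig.strategy`) are assumed from the upstream gpu-operator chart rather than taken from this text, and the repository alias `nvidia`, the `gpu-operator` namespace, and the file name are illustrative assumptions. Running `helm show values` against your chart version is the safest way to confirm the names. The script only writes the file and prints the install commands, so it does not need a cluster.

```shell
# Assumed file name; override with VALUES_FILE=... if preferred.
VALUES_FILE="${VALUES_FILE:-gpu-operator-values.yaml}"

# Parameter names below are assumed from the upstream chart, not from this text.
cat > "$VALUES_FILE" <<'EOF'
nfd:
  enabled: false      # NFD is already running in the cluster
driver:
  enabled: false      # drivers are pre-installed on the hosts
cdi:
  enabled: true       # install the nvidia-cdi and nvidia-legacy runtime classes
mig:
  strategy: single    # MIG strategy on supported GPUs
EOF

# The same options expressed as --set arguments.
SET_ARGS="--set nfd.enabled=false --set driver.enabled=false --set cdi.enabled=true --set mig.strategy=single"

# Print the commands instead of running them (assumed repo alias: nvidia).
INSTALL_CMD="helm install --wait --generate-name -n gpu-operator --create-namespace nvidia/gpu-operator"
echo "$INSTALL_CMD -f $VALUES_FILE"
echo "$INSTALL_CMD $SET_ARGS"
```

A values file is usually easier to review and keep in version control than a long chain of `--set` flags; either printed command can be copied and run once the option names have been checked against the installed chart.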
